Continuous Delivery of AT Cloud Services

What is Continuous Delivery?

Continuous delivery is the ability to release changes of all kinds on demand quickly, safely, and sustainably. Teams that practice continuous delivery well can release software and make changes to production in a low-risk way at any time, including during normal business hours, without impacting users.

The principles and practices of continuous delivery are universal to any software context, which means they are suitable for any of our products and services.

Teams that practice continuous delivery can answer "yes" to the following questions:

- Is our software in a deployable state throughout its lifecycle?

- Do we prioritize keeping the software deployable over working on new features?

- Is fast feedback on the quality and deployability of the system we are working on available to everyone on the team?

- When we get feedback that the system is not deployable (such as failing builds or tests), do we make fixing these issues our highest priority?

- Can we deploy our system to production, or to end users, at any time, on demand?

This is adapted from DORA.

How does Continuous Delivery Contribute to Organisational Success?

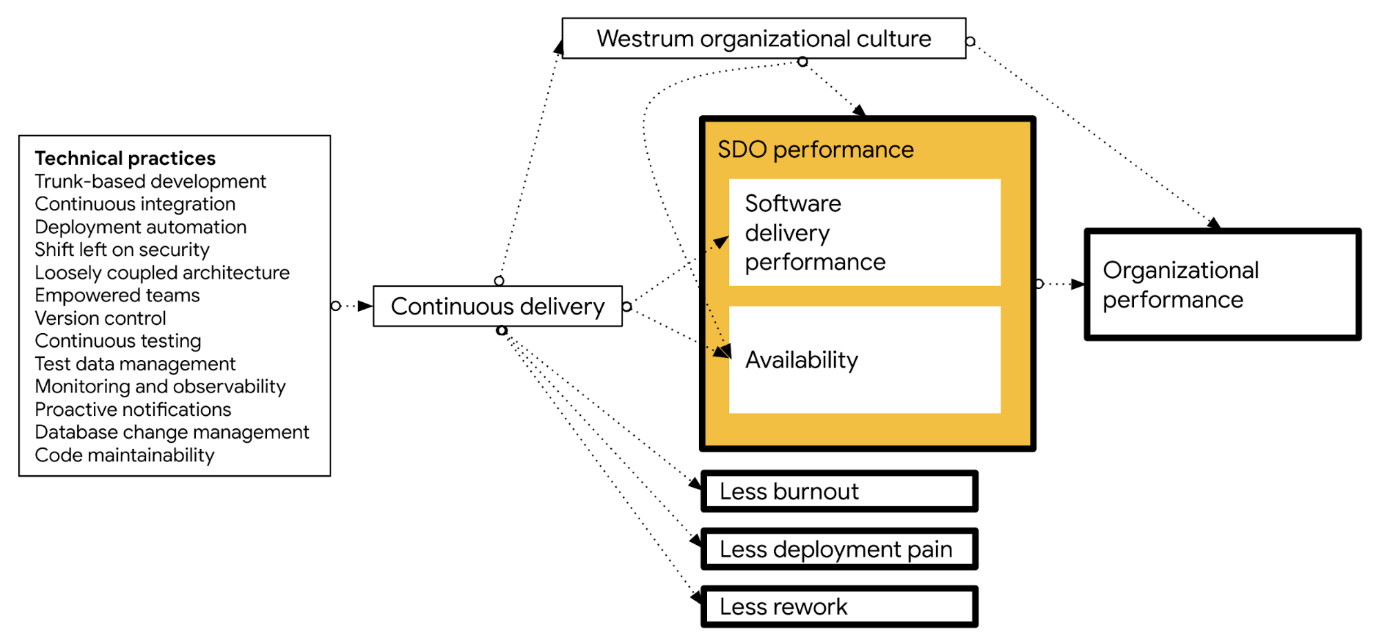

There are significant benefits to organisations that adopt continuous delivery. DORA research shows that doing well at continuous delivery provides the following benefits:

- Improves software delivery performance, measured in terms of the four key metrics, and leads to higher levels of availability.

- Leads to higher levels of quality, measured by the percentage of time teams spend on rework or unplanned work, as shown in the 2016 State of DevOps Report (pp. 25-26) and the 2018 State of DevOps Report (pp. 27-29).

- Predicts lower levels of burnout (physical, mental, or emotional exhaustion caused by overwork or stress), higher levels of job satisfaction, and better organizational culture.

- Reduces deployment pain, a measure of the extent to which deployments are disruptive rather than easy and pain-free, as well as the fear and anxiety that engineers and technical staff feel when they push code into production.

- Impacts culture, leading to greater levels of psychological safety and a more mission-driven organizational culture.
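The four key metrics mentioned in the first benefit are deployment frequency, lead time for changes, change failure rate, and time to restore service. As a rough illustration of how a team might compute them from its own deployment records, here is a minimal sketch. The `Deployment` record shape and the `four_key_metrics` function are hypothetical, and measuring restore time from the moment of deployment is a simplifying assumption.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape)."""
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it degrade service?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def four_key_metrics(deployments: list[Deployment], period_days: int) -> dict:
    """Compute the four key metrics over a reporting period."""
    if not deployments:
        raise ValueError("need at least one deployment")
    lead_times = [d.deployed_at - d.committed_at for d in deployments]
    failures = [d for d in deployments if d.failed]
    # Assumption: restore time is measured from the failing deployment.
    restore_times = [
        d.restored_at - d.deployed_at for d in failures if d.restored_at is not None
    ]
    return {
        "deployment_frequency_per_day": len(deployments) / period_days,
        "median_lead_time": median(lead_times),
        "change_failure_rate": len(failures) / len(deployments),
        "median_time_to_restore": median(restore_times) if restore_times else None,
    }
```

Tracking these numbers over time, rather than as a one-off, is what makes them useful for judging whether delivery performance is improving.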

The following diagram shows how a set of technical practices impacts continuous delivery, which in turn drives the outcomes listed above:

This is adapted from DORA.

How could Continuous Delivery suit AT Cloud Services?

AT's products have traditionally been software products that are installed on customers' premises. They have had a natural lifecycle of build, test, distribute, with engineering mostly focussed on the building and testing of software that cannot easily be changed once installed.

With services running in the cloud, these natural stages have mostly disappeared, as AT controls the environment where the software runs. This gives AT the freedom to decide exactly when each service changes - down to the feature level.

Continuous Delivery is therefore a natural match for cloud deployment. Instead of delivering a complete product every quarter, continuous delivery moves small chunks of work in a continuous flow from concept to user, with as little interruption in the flow as possible.

Definitions

We use a number of terms in Continuous Delivery, and it is helpful to understand the role each part plays.

At a high level, we can split our system into two parts: the "platform" and the "images". Put simply, the platform enables developers to deploy their software, built from images working together.

The platform also encompasses infrastructure. This involves setting up virtual machines, AWS services and configuration, and creating environments for the software to be deployed into.

The "Platform"

There are many pieces of infrastructure that developers must consider when deploying a system to the cloud. One example is secure communication between software components and the end user. There are also non-functional requirements, like ensuring consistent performance no matter how many users are active, that require effort from engineers.

It is useful to abstract as many of these concerns as possible away from a developer's day-to-day work, so they can concentrate on building features. This abstraction is commonly referred to as the "platform".

The platform team is responsible for the Continuous Delivery infrastructure, among other things. This infrastructure should handle the deployment of application images into our different environments, including staging and production. It enforces deployment standards, e.g. via automated tests, and allows the support team to quickly roll back changes that cause unexpected issues.

There are open source software tools available for this. For example, OpenTofu is a tool to set up your environment in external providers (e.g. public cloud providers like AWS) without manual steps; ArgoCD is a tool to deploy software into an environment. Both of these tools require declarative configuration, which means users get both a human- and machine-readable representation of their environment that can be stored in version control.

Storing declarative configuration in version control and using a tool to apply changes is part of a deployment technique called "GitOps". GitOps is an important counterpart of continuous delivery, allowing organizations to deploy quickly and improving the developer experience.
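The core idea of applying declarative configuration can be sketched as a comparison between the desired state (what is in version control) and the live state (what is running). The example below is a toy model only: `plan_changes` and `reconcile` are hypothetical names, state is reduced to a mapping from workload name to image reference, and it does not reflect how ArgoCD or OpenTofu are actually implemented.

```python
def plan_changes(desired: dict[str, str], live: dict[str, str]) -> dict[str, list[str]]:
    """Compare desired state (from version control) with live state.

    Both arguments map a workload name to the image reference it should run.
    """
    return {
        "create": sorted(name for name in desired if name not in live),
        "update": sorted(
            name for name in desired if name in live and desired[name] != live[name]
        ),
        "delete": sorted(name for name in live if name not in desired),
    }


def reconcile(desired: dict[str, str], live: dict[str, str]) -> dict[str, str]:
    """Return the live state after applying the planned changes."""
    plan = plan_changes(desired, live)
    new_live = dict(live)
    for name in plan["create"] + plan["update"]:
        new_live[name] = desired[name]
    for name in plan["delete"]:
        del new_live[name]
    return new_live
```

Under this model a rollback needs no special mechanism: reverting the commit restores the previous desired state, and the next reconciliation moves the environment back to it.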

The "Images"

The platform provides an environment for software to run. The software that runs in it is a set of containers, built from images. In Kubernetes parlance, pods are made up of one or more containers. Each container references one image.

A set of images working together forms the overall application, e.g. OneConnect.

The teams that are responsible for these images have autonomy over the code that runs in the pod, up until the point where it interacts with other images. At that point they must engage with the other development teams, including the platform team, to ensure best practices are being followed.

This gives teams a large amount of freedom to get their features implemented without adversely impacting the wider solution. For example, they can choose whatever language they like, but they cannot use a different API mechanism (e.g. REST/GraphQL/gNMI) without discussing it with the wider engineering group beforehand.

Images must be loosely coupled to other images for continuous delivery to succeed. That is, it should not be required that more than one image is deployed at the same time for a successful deployment; such coupling would mean each image is no longer in control of its own lifecycle and would need to be treated specially in each deployment. See A loosely-coupled architecture in Architecture of AT Cloud Services for more information.
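One way a pipeline might surface accidental coupling is to flag any release that changes more than one image at once. The sketch below assumes a release can be described as a mapping from image name to tag; `changed_images` and `is_independent_release` are hypothetical helpers, and the "at most one image per release" rule is a stricter illustrative policy rather than an established one.

```python
def changed_images(previous: dict[str, str], proposed: dict[str, str]) -> list[str]:
    """Return the names of images whose tag differs between two releases."""
    names = set(previous) | set(proposed)
    return sorted(n for n in names if previous.get(n) != proposed.get(n))


def is_independent_release(previous: dict[str, str], proposed: dict[str, str]) -> bool:
    """True when the release changes at most one image."""
    return len(changed_images(previous, proposed)) <= 1
```

A release that fails this check is not necessarily wrong, but it is a prompt to ask whether the images involved really need to be deployed together.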

Deployment Lifecycles

Application deployment can happen frequently, perhaps multiple times a day. Infrastructure provisioning would happen much less frequently, perhaps once a month.

One instance of the environment typically hosts multiple application instances at different versions, e.g. dev/test/prod.

Further Reading

More information about the split of platform and images is available on the Argo CD website.

Continuous Integration vs Continuous Delivery

The split of images and platform makes it easy to understand the difference between Continuous Integration and Continuous Delivery. Where CI is designed to produce high quality images, CD is designed to produce a high quality deployment process.

Producing high quality images is a prerequisite of CD. This means that we use CI to automatically test images as much as is practical before they go into the CD pipeline. It is tempting to rely on end-to-end tests, which check that the whole system works together, but these tests are slow, expensive to run, and hard to debug when they fail. Testing as early as is practical in the process keeps the expensive integration testing step as small as possible, saving time and money.
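The "test early" idea can be illustrated with a pipeline that runs its cheapest checks first and stops at the first failure, so the expensive stages only run on changes that have already passed the quick ones. This is a minimal sketch; `run_pipeline` and the stage names are hypothetical and do not describe our Jenkins configuration.

```python
from typing import Callable

# A stage is a name plus a zero-argument check that returns True on success.
Stage = tuple[str, Callable[[], bool]]


def run_pipeline(stages: list[Stage]) -> list[str]:
    """Run stages in order, stopping at the first failure.

    Returns the names of the stages that were actually executed.
    """
    executed = []
    for name, check in stages:
        executed.append(name)
        if not check():
            break
    return executed


# Cheapest checks first: the end-to-end stage never runs if an earlier one fails.
stages: list[Stage] = [
    ("unit", lambda: True),
    ("integration", lambda: False),  # simulated failure
    ("end-to-end", lambda: True),
]
executed = run_pipeline(stages)
```

Here `executed` contains only the unit and integration stages, because the simulated integration failure stops the run before the end-to-end stage.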

The manifests that we write to run the images in Kubernetes can only really be tested in a production-like environment, e.g. AWS. Using CD means we can deploy to a staging environment using the same configuration as production, thereby exposing any bugs in our manifest files there.

These topics are covered in more detail in Best Practices for Testing in a CD Environment.

Code Structure

Images

Each image belongs in its own repository. Each repository is responsible for building, testing and publishing the image to the local container registry. It can do this via any means required; for example, in New Zealand we use Jenkins to build and test, and Harbor as the container registry. The Harbor registry mirrors images under the oneconnect namespace to the Amazon ECR registry.
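The mirroring rule can be pictured as a mapping from a Harbor image reference to its ECR counterpart. The sketch below is illustrative only: both registry hostnames are made-up placeholders, `mirrored_reference` is a hypothetical helper, and it assumes the mirrored image keeps the same namespace, name and tag in ECR.

```python
from typing import Optional

HARBOR_HOST = "harbor.example.internal"  # hypothetical hostname
ECR_HOST = "123456789012.dkr.ecr.ap-southeast-2.amazonaws.com"  # hypothetical account/region
MIRRORED_NAMESPACE = "oneconnect"


def mirrored_reference(image_ref: str) -> Optional[str]:
    """Return the ECR reference for a Harbor image, or None if it is not mirrored.

    Only images under the mirrored namespace are expected to appear in ECR.
    """
    prefix = f"{HARBOR_HOST}/{MIRRORED_NAMESPACE}/"
    if not image_ref.startswith(prefix):
        return None
    # Assumption: the path after the registry host is preserved unchanged.
    return f"{ECR_HOST}/{image_ref[len(HARBOR_HOST) + 1:]}"
```

An image published outside the oneconnect namespace returns `None` here, which matches the point that only that namespace is mirrored.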

In New Zealand, the image repositories live on the ATLNZ Gerrit Server using the following structure:

cloud/
├── applications/
│   ├── app1.git    # Code + pipeline to build app1 image
│   ├── app2.git    # Code + pipeline to build app2 image
│   └── ...         # More app repos

Manifests

Manifests live together, grouped by their deployment. Manifests are stored in GitHub, as the cloud instances of ArgoCD need to be able to see them. Whilst image repositories may also have a manifest, this is only used for automated tests and is not used for deployment.

Benefits

- Individual images are small with limited responsibilities.

- It allows for separate life cycles:

  - Day-One infrastructure deployment - AWS accounts, secrets management infrastructure

  - Day-N infrastructure deployment - Pipeline for building AWS resources

    - VPC, EKS cluster, supporting infrastructure

  - ArgoCD Applications - Logical separation and lifecycle management of applications

    - OneConnect application

    - NATS server

    - ...

  - OneConnect application - Kubernetes manifests for the OneConnect app

    - Encapsulated/abstracted in the ArgoCD Application 'One Connect'

    - Allows for multiple deployments at different versions with overlays (via Kustomize)
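The overlay idea behind the last point can be sketched as a shared base configuration with a small per-environment patch merged on top. The example below is a simplified recursive merge written for illustration; `apply_overlay` is a hypothetical function, the configuration keys are invented, and Kustomize's own patching rules are richer than this.

```python
import copy


def apply_overlay(base: dict, overlay: dict) -> dict:
    """Recursively merge an overlay onto a copy of the base; overlay values win."""
    merged = copy.deepcopy(base)
    for key, value in overlay.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = apply_overlay(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


# Hypothetical base configuration shared by every deployment.
base = {"image": {"name": "oneconnect/app1", "tag": "1.0.0"}, "replicas": 1}

# Each environment only states what differs from the base.
overlays = {
    "staging": {"image": {"tag": "1.1.0-rc1"}},
    "production": {"replicas": 3},
}

rendered = {env: apply_overlay(base, patch) for env, patch in overlays.items()}
```

Because each overlay records only its differences, staging can run a newer version than production while both stay tied to the same base definition.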

Environments and Deployments

We use ArgoCD to deploy manifests to our environments, and Terraform to set up the environments. There is one ArgoCD service per environment, which in production means there is one per region. Currently we only deploy one instance per environment.

All OneConnect infrastructure is currently deployed using the following projects / repositories:

- oc-infrastructure: Repository for deploying Terraform projects.

- argocd-oc-system: Repository for deploying system apps within Kubernetes.

- argocd-oc-apps: Repository for deploying OneConnect customer-facing apps within Kubernetes.

More information is available in the infrastructure overview document.